How StackAware uses agentic AI to manage AI risk

The analyst who never sleeps, calls in sick, or misses when a vendor changes terms & conditions.

StackAware’s agentic risk assessment capability is the analyst who never:

Sleeps

Calls in sick

Misses when a vendor changes terms & conditions

Our customers now get continuous monitoring of known AI assets, including:

Alerts to newly identified risks

Human-in-the-loop review for material changes

ISO 42001 compliant model & system assessments

And updates can be pushed to:

Spreadsheet risk registers

Our API and MCP server

GRC platforms

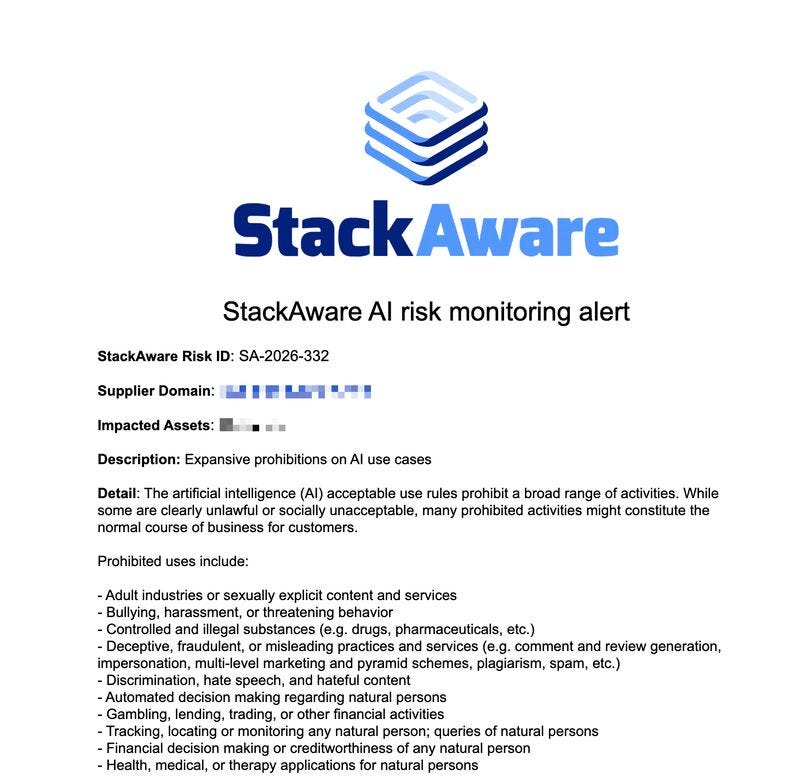

Or sent via email, like in the below example:

Here’s what we learned building the feature:

Step 1: Verify freshness

Every risk datapoint we track is tied to a source (usually a website).

So instead of re-reviewing everything constantly, we:

Hash the source at time of ingestion

Re-check the hash on a schedule

If the hash hasn’t changed:

The underlying information hasn’t changed

We auto-update the record and move on

No wasted effort.

(And no AI used.)
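Here is a minimal sketch of that freshness check in Python. It is an illustration, not our production code: `SourceRecord`, `hash_content`, and `check_freshness` are hypothetical names, SHA-256 is an assumed choice of hash, and "auto-update the record" is interpreted here as refreshing a last-verified timestamp. Fetching the page is left out; the function takes the current content as bytes.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class SourceRecord:
    """Hypothetical record tying a risk datapoint to its source."""
    url: str
    content_hash: str        # hash of the source at time of ingestion
    last_verified: datetime  # when we last confirmed the source was unchanged


def hash_content(content: bytes) -> str:
    # SHA-256 is an assumption; any stable cryptographic hash would do.
    return hashlib.sha256(content).hexdigest()


def check_freshness(record: SourceRecord, current_content: bytes) -> bool:
    """Return True if the source is unchanged, refreshing the timestamp."""
    if hash_content(current_content) == record.content_hash:
        record.last_verified = datetime.now(timezone.utc)
        return True
    # Hash differs: leave the record alone and hand off to change detection.
    return False
```

One caveat worth noting: hashing raw page bytes flags any change at all, including dynamic markup, which is why the next step exists.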

Step 2: Detect change — but don’t overreact

If the hash changes, something on the site did too.

But not everything matters.

So we call an AI agent to evaluate:

Is this a real policy / behavior change?

Or just formatting, spelling, layout noise?

If it’s not material:

System records the update

No human needed
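The triage logic can be sketched roughly like this. The AI agent is represented by a plain callable so the routing is testable without a model; `Verdict`, `triage_change`, and the returned action strings are hypothetical names, and `whitespace_only_stub` is a toy stand-in, not an AI classifier.

```python
from enum import Enum
from typing import Callable


class Verdict(Enum):
    MATERIAL = "material"          # real policy / behavior change
    NOT_MATERIAL = "not_material"  # formatting, spelling, layout noise


# Assumed interface for the agent: (old_text, new_text) -> Verdict.
Classifier = Callable[[str, str], Verdict]


def triage_change(old_text: str, new_text: str, classify: Classifier) -> str:
    """Route a detected change based on the classifier's verdict."""
    if classify(old_text, new_text) is Verdict.MATERIAL:
        return "escalate_to_human"
    # Not material: record the update, no human needed.
    return "record_update"


def whitespace_only_stub(old_text: str, new_text: str) -> Verdict:
    """Toy stand-in for the agent: only whitespace differences are noise."""
    if old_text.split() == new_text.split():
        return Verdict.NOT_MATERIAL
    return Verdict.MATERIAL
```

Keeping the classifier behind a simple interface makes it easy to swap prompts or models without touching the routing.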

Step 3: Assume the AI will miss things

Even when the AI says “no material change,” we still:

Randomly sample ~10%

Send to human review

This gives us a continuous quality check.

Not trust. Verification.
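A rough sketch of the sampling step, assuming each "not material" verdict is sampled independently at a fixed rate. The function name and the injectable `rng` parameter are illustrative choices, not a description of our actual implementation.

```python
import random
from typing import Optional


def sample_for_review(sample_rate: float = 0.10,
                      rng: Optional[random.Random] = None) -> bool:
    """Decide whether a 'not material' verdict still goes to a human.

    Each verdict is sampled independently, so roughly `sample_rate`
    of them end up in the review queue over time.
    """
    source = rng if rng is not None else random
    # random() returns a float in [0.0, 1.0).
    return source.random() < sample_rate


# Usage: a seeded generator makes the sampling reproducible for audits.
rng = random.Random(42)
queue = [i for i in range(1000) if sample_for_review(0.10, rng)]
```

Independent sampling means the exact share fluctuates from batch to batch; it only converges on ~10% over a large number of verdicts.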

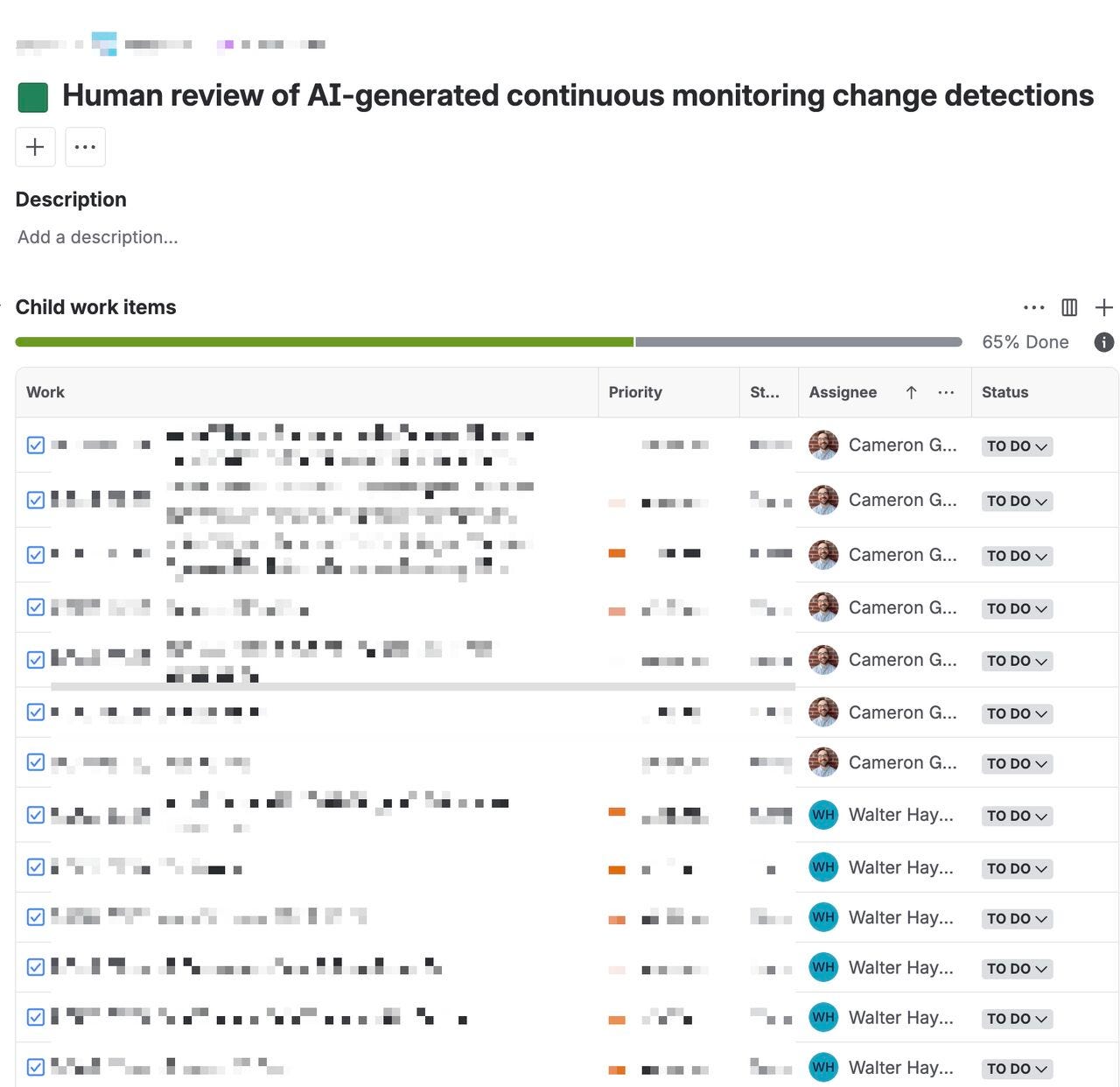

Here’s an example:

Step 4: Escalate what matters

If the AI flags a material change:

It goes to a human

The reviewer confirms or rejects the AI’s assessment

If needed, they provide analysis and a recommended update

Humans focus on signal, not noise.

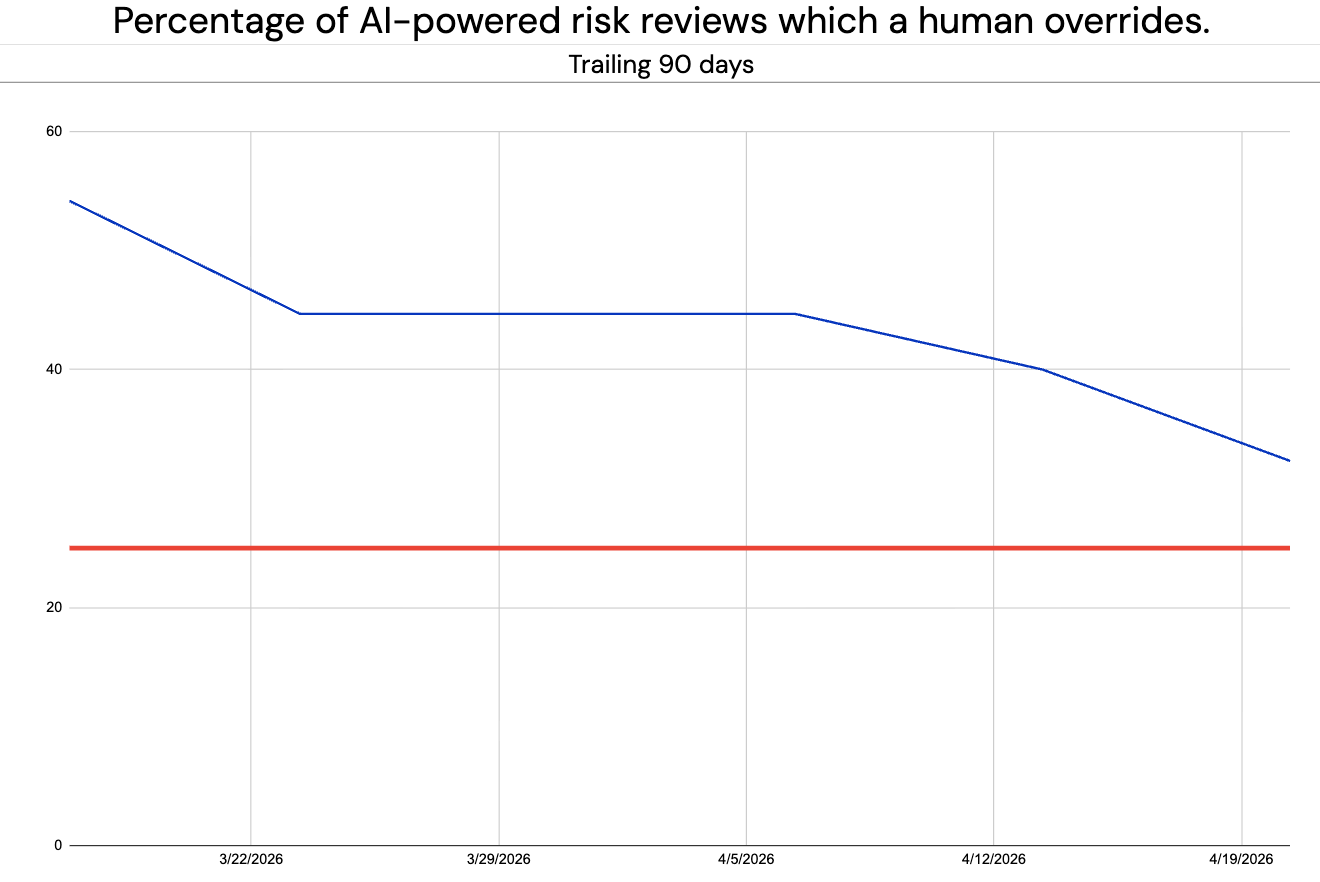

Step 5: Measure the system itself

This is the part most teams skip.

We track:

AI vs human agreement rates

Override frequency

Drift over time

Why?

Because under ISO 42001, your oversight system is itself a system that needs monitoring.

Performance has been steadily improving as we tweak the system prompt and other variables. In fact, we are close to our target override rate of <25%.
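For illustration, here is one way those numbers could be computed from human-reviewed items. The definitions are assumptions: agreement is the share of reviewed items where the human reached the same verdict as the AI, and override rate is the share where the human reversed it. `Review` and both function names are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Review:
    """One human-reviewed item (hypothetical structure)."""
    ai_material: bool     # the AI's verdict
    human_material: bool  # the human reviewer's verdict


def agreement_rate(reviews: Sequence[Review]) -> float:
    """Share of reviewed items where the human agreed with the AI."""
    if not reviews:
        raise ValueError("no reviews to measure")
    agreed = sum(r.ai_material == r.human_material for r in reviews)
    return agreed / len(reviews)


def override_rate(reviews: Sequence[Review]) -> float:
    """Share of reviewed items where the human overrode the AI."""
    return 1.0 - agreement_rate(reviews)


# Usage with made-up data: one override out of five reviews.
sample = [
    Review(True, True),
    Review(False, False),
    Review(True, False),   # human overrode the AI
    Review(False, False),
    Review(True, True),
]
meets_target = override_rate(sample) < 0.25
```

Computing the same rates per week or per month, then comparing windows, is a simple way to watch for drift over time.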

What this unlocked

We didn’t just automate continuous risk assessment.

We made it:

Auditable

Defensible

Measurable

without losing control.

Most teams worry AI governance means slowing down.

When done well, it’s the opposite.

Need to automate your AI risk management?